Microsoft Official Courses (MOC)

Course DP-100T01-A: Designing and Implementing a Data Science Solution on Azure

About this Course:

- Learn how to operate machine learning solutions at cloud scale using Azure Machine Learning. This course teaches you to leverage your existing knowledge of Python and machine learning to manage data ingestion and preparation, model training and deployment, and machine learning solution monitoring in Microsoft Azure.

Course Goals/Skills (What You Will Learn):

- Explain the evolving world of data

- Survey the services in the Azure Data Platform

- Identify the tasks that are performed by a Data Engineer

- Describe the use cases for the cloud in a Case Study

- Choose a data storage approach in Azure

- Create an Azure Storage Account

- Explain Azure Data Lake Storage

- Upload data into Azure Data Lake

- Explain Azure Databricks

- Work with Azure Databricks

- Read data with Azure Databricks

- Perform transformations with Azure Databricks

- Create an Azure Cosmos DB database built to scale

- Insert and query data in your Azure Cosmos DB database

- Build a .NET Core app for Azure Cosmos DB in Visual Studio Code

- Distribute data globally with Azure Cosmos DB

- Use Azure SQL Database

- Describe Azure Data Warehouse

- Create and Query an Azure SQL Data Warehouse

- Use PolyBase to Load Data into Azure SQL Data Warehouse

- Explain data streams and event processing

- Understand Data Ingestion with Event Hubs

- Understand Processing Data with Stream Analytics Jobs

- Understand Azure Data Factory and Databricks

- Understand Azure Data Factory Components

- Explain how Azure Data Factory works

- Gain an introduction to security

- Understand key security components

- Understand securing Storage Accounts and Data Lake Storage

- Understand securing Data Stores

- Understand securing Streaming Data

- Explain the monitoring capabilities that are available

- Troubleshoot common data storage issues

- Troubleshoot common data processing issues

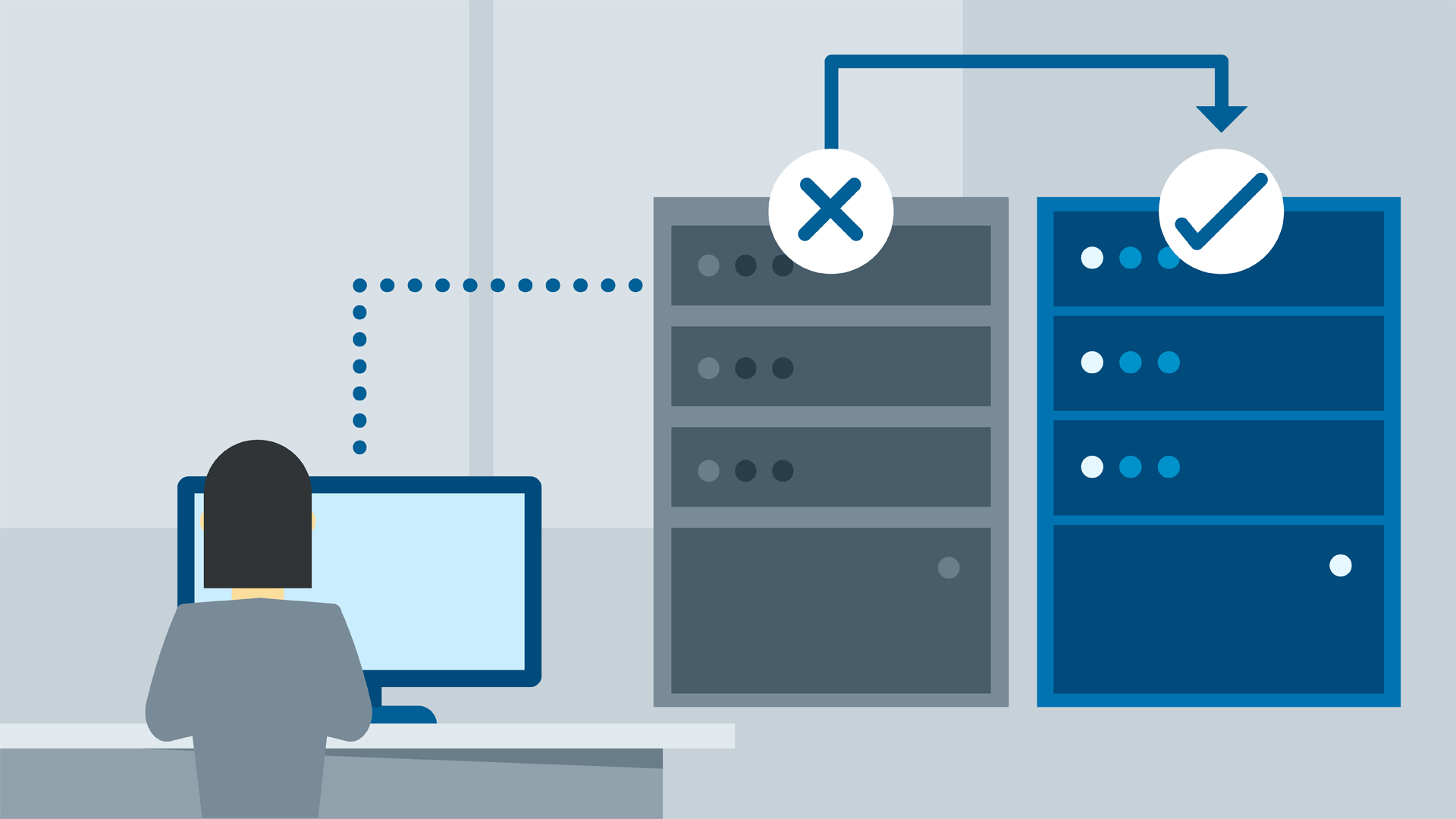

- Manage disaster recovery

Audience:

- This course is designed for data scientists with existing knowledge of Python and machine learning frameworks such as Scikit-Learn, PyTorch, and TensorFlow who want to build and operate machine learning solutions in the cloud.

Course Format

Course Language Option

You can choose the language in which the training is delivered: Bulgarian or English. All of our instructors are fluent in English.

Course Materials: provided in electronic format (the materials are in English) and included in the price, with unlimited access.

Lab Environment: each student has their own lab environment in which the course exercises are carried out.

Duration

- 3 working days (09:00 – 17:00)

or

- 24 academic hours of training (theory and practice) outside working hours, spread over 2 weeks

- Saturday and Sunday 10:00 – 14:00, 14:00 – 18:00, 18:00 – 22:00

- Monday and Wednesday 19:00 – 23:00

- Tuesday and Thursday 19:00 – 23:00

Payment

Requests for an invoice are accepted at the time of enrollment in the respective course.

An invoice is issued within 7 days of payment confirmation.

Upcoming Courses

For more information, please use the contact form.

We will contact you to confirm the dates.

Prerequisites:

Before attending this course, students must have:

- A fundamental knowledge of Microsoft Azure

- Experience writing Python code to work with data, using libraries such as NumPy, Pandas, and Matplotlib.

- An understanding of data science, including how to prepare data and train machine learning models using common machine learning libraries such as Scikit-Learn, PyTorch, or TensorFlow.

The course prepares you for the following certifications

- Exam DP-100: Designing and Implementing a Data Science Solution on Azure

- You can take the certification exam at our test center with a voucher that discounts the exam price.

Curriculum

- 10 Sections

- 40 Lessons

- 10 Weeks

- Module 1: Introduction to Azure Machine Learning. In this module, you will learn how to provision an Azure Machine Learning workspace and use it to manage machine learning assets such as data, compute, model training code, logged metrics, and trained models. You will learn how to use the web-based Azure Machine Learning studio interface as well as the Azure Machine Learning SDK and developer tools like Visual Studio Code and Jupyter Notebooks to work with the assets in your workspace. (4 lessons)

- Module 2: No-Code Machine Learning with Designer. This module introduces the Designer tool, a drag-and-drop interface for creating machine learning models without writing any code. You will learn how to create a training pipeline that encapsulates data preparation and model training, convert that training pipeline to an inference pipeline that can predict values from new data, and finally deploy the inference pipeline as a service for client applications to consume. (4 lessons)

- Module 3: Running Experiments and Training Models. In this module, you will get started with experiments that encapsulate data processing and model training code, and use them to train machine learning models. (4 lessons)

- Module 4: Working with Data. Data is a fundamental element in any machine learning workload, so in this module, you will learn how to create and manage datastores and datasets in an Azure Machine Learning workspace, and how to use them in model training experiments. (4 lessons)

- Module 5: Compute Contexts. One of the key benefits of the cloud is the ability to leverage compute resources on demand, and use them to scale machine learning processes to an extent that would be infeasible on your own hardware. In this module, you'll learn how to manage experiment environments that ensure runtime consistency for experiments, and how to create and use compute targets for experiment runs. (4 lessons)

- Module 6: Orchestrating Operations with Pipelines. Now that you understand the basics of running workloads as experiments that leverage data assets and compute resources, it's time to learn how to orchestrate these workloads as pipelines of connected steps. Pipelines are key to implementing an effective Machine Learning Operationalization (ML Ops) solution in Azure, so you'll explore how to define and run them in this module. (4 lessons)

- Module 7: Deploying and Consuming Models. Models are designed to help decision making through predictions, so they're only useful when deployed and available for an application to consume. In this module, you'll learn how to deploy models for real-time inferencing and for batch inferencing. (4 lessons)

- Module 8: Training Optimal Models. By this stage of the course, you've learned the end-to-end process for training, deploying, and consuming machine learning models, but how do you ensure your model produces the best predictive outputs for your data? In this module, you'll explore how you can use hyperparameter tuning and automated machine learning to take advantage of cloud-scale compute and find the best model for your data. (4 lessons)

- Module 9: Interpreting Models. Many of the decisions made by organizations and automated systems today are based on predictions made by machine learning models. It's increasingly important to be able to understand the factors that influence the predictions made by a model, and to be able to determine any unintended biases in the model's behavior. This module describes how you can interpret models to explain how feature importance determines their predictions. (4 lessons)

- Module 10: Monitoring Models. After a model has been deployed, it's important to understand how the model is being used in production, and to detect any degradation in its effectiveness due to data drift. This module describes techniques for monitoring models and their data. (4 lessons)